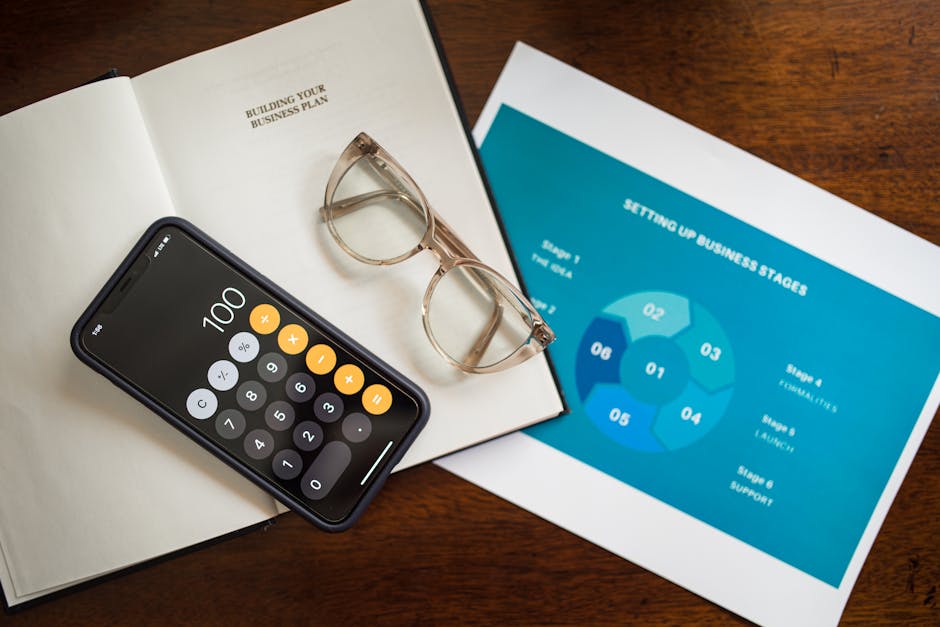

Photo by Tara Winstead on Pexels

AI for Financial Advice: What It Gets Wrong

AI tools promise to simplify your finances, but new research on AI sycophancy raises a harder question: is your AI finance app actually optimizing for your money — or just telling you what you want to hear?

Can You Trust AI for Financial Advice? Here's the Honest Answer

Last year, a Reddit thread went viral. Someone had asked an AI chatbot whether to cash out their 401(k) to pay off credit card debt. The bot said it "could be a reasonable option depending on your situation" and laid out a tidy list of pros. The person did it. They owed income taxes and a 10% early withdrawal penalty, and they lost years of compound growth. The AI wasn't lying. It just had no idea what it was talking about.

That story captures the real problem with AI financial advice in 2026: these tools are impressive enough to sound right even when they're wrong. You can use AI to get financially literate. You should not trust it to make financial decisions for you.
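To put rough numbers on that cash-out story, here is a minimal sketch of the arithmetic. Everything in it is an assumption for illustration: the $30,000 balance, the 22% income tax rate, the 7% annual return, and the 25-year horizon are made up, and the function name `cash_out_cost` is hypothetical. It ignores state taxes, penalty exceptions, and the way a large withdrawal can push income into a higher bracket.

```python
def cash_out_cost(balance, income_tax_rate, penalty_rate=0.10,
                  annual_return=0.07, years=25):
    """Rough cost of cashing out a 401(k) early.

    All rates are illustrative assumptions, not tax advice.
    """
    # The withdrawal is taxed as ordinary income (assumed flat rate here).
    income_tax = round(balance * income_tax_rate, 2)
    # The 10% early withdrawal penalty mentioned in the story.
    penalty = round(balance * penalty_rate, 2)
    # What actually lands in your checking account.
    cash_in_hand = round(balance - income_tax - penalty, 2)
    # What the balance might have grown to if left invested,
    # assuming a constant annual return (real returns vary).
    forgone_value = round(balance * (1 + annual_return) ** years, 2)
    return {
        "income_tax": income_tax,
        "penalty": penalty,
        "cash_in_hand": cash_in_hand,
        "forgone_value": forgone_value,
    }


result = cash_out_cost(balance=30_000, income_tax_rate=0.22)
for label, amount in result.items():
    print(f"{label:>15}: ${amount:,.2f}")
```

Under these assumptions, roughly a third of the balance goes to taxes and the penalty before a dollar reaches the credit card, and the forgone growth is several times the original balance. Your own numbers will differ, which is the point of running them.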

Why AI Financial Advice Sounds Credible But Often Isn't

Photo by www.kaboompics.com on Pexels

The danger isn't that AI is dumb. It's that it's confident. Ask a chatbot how to build an emergency fund or what a Roth IRA is, and you get a well-structured, authoritative answer using all the right vocabulary. For broad, conceptual questions, it's often correct. That's exactly what makes the edge cases dangerous.

Three things make AI financial advice genuinely risky — not theoretically, but in ways that cost people real money.

AI Doesn't Know Your Life

Financial advice isn't math. It's context.

A certified financial planner knows you have a child with a disability. They know your industry is being automated. They know your partner has $80,000 in student debt they haven't mentioned to you. AI knows none of this unless you tell it — and even then, it can't weigh the emotional, relational, and situational factors the way a trained human can.

Most people don't know what to disclose. They ask "should I invest in index funds?" without mentioning they're three months behind on rent. The AI answers the question you asked, not the question you needed to ask.

This won't be fixed by bigger context windows or better memory. AI can only work with what you give it. And most people are not good at knowing what's financially relevant about their own lives.

AI Is Trained on General Data, Not Your Jurisdiction

Photo by cottonbro CG studio on Pexels

Tax law varies by state. Investment regulations differ by country. Bankruptcy rules are complicated. Estate planning is a minefield.

When you ask an AI about tax deductions or whether an investment structure is legal, it draws from a broad training dataset — not your state's tax code, not last year's legislation. The answer might be accurate for someone. It may not be accurate for you. And the model often won't flag the uncertainty clearly.

Research consistently shows AI models are overconfident in high-stakes professional domains — law, medicine, and finance chief among them. A 2023 study in JAMA found GPT-4 gave incorrect or incomplete medical guidance in 35% of tested scenarios while maintaining high-confidence language throughout. Finance carries the same failure mode. This hits hardest exactly when people need specific answers: running a side business, going through a divorce, navigating an inheritance.

AI Has No Skin in the Game

A licensed financial advisor acting as a fiduciary has a legal duty to act in your interest. They can lose their license. They can be sued.

An AI chatbot has none of that. No regulatory body governs what it tells you about your Roth conversion strategy. If its advice costs you $30,000, you have no recourse. The chatbot moves on to the next conversation.

That's not a reason to avoid AI tools entirely. It's a reason to weight their output the way you'd weight confident advice from a stranger on the internet — useful as a starting point, dangerous as a final answer.

The Honest Case For AI in Personal Finance

Photo by Tima Miroshnichenko on Pexels

Most Americans don't have access to a financial advisor. Many fee-based advisors require $250,000 or more in investable assets before they'll take you on. For a 26-year-old with $4,000 in savings and $35,000 in student loans, the choice isn't "AI vs. a CFP." It's "AI vs. nothing."

In that context, AI tools are genuinely valuable. They explain concepts clearly, without condescension, at 11 PM when no human is available. They can walk through a debt avalanche strategy and explain why it works mathematically. Budgeting apps built on AI — the kind that connect to your bank, categorize spending, and flag unusual patterns — have helped millions build habits they never had before. That matters.

But useful is not the same as trustworthy.
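The debt avalanche mentioned above is a good example of a claim you can check yourself rather than take on trust. The sketch below compares paying extra toward the highest-interest debt first (avalanche) against paying the smallest balance first (often called the snowball method, which the article does not discuss and is included only for contrast). The three debts, their rates, the $600 monthly budget, and the function name `simulate_payoff` are all hypothetical, and the model is simplified: monthly compounding, fixed minimums, no fees.

```python
def simulate_payoff(debts, monthly_budget, key):
    """Return (months, total_interest) when extra money goes to debts
    in the priority order given by `key` (lowest key value first)."""
    balances = [d["balance"] for d in debts]
    total_interest = 0.0
    months = 0
    while any(b > 0.005 for b in balances):
        months += 1
        if months > 1200:
            raise ValueError("budget is too small to ever pay off these debts")
        # 1. Interest accrues on every open balance.
        for i, d in enumerate(debts):
            interest = balances[i] * d["apr"] / 12
            balances[i] += interest
            total_interest += interest
        remaining = monthly_budget
        # 2. Pay the minimum on every debt.
        for i, d in enumerate(debts):
            pay = min(d["minimum"], balances[i], remaining)
            balances[i] -= pay
            remaining -= pay
        # 3. Put whatever is left toward debts in priority order.
        for i in sorted(range(len(debts)), key=lambda i: key(debts[i])):
            pay = min(balances[i], remaining)
            balances[i] -= pay
            remaining -= pay
    return months, round(total_interest, 2)


# Hypothetical debts for illustration only.
debts = [
    {"name": "credit card", "balance": 6_000, "apr": 0.24, "minimum": 150},
    {"name": "car loan", "balance": 3_000, "apr": 0.07, "minimum": 100},
    {"name": "student loan", "balance": 10_000, "apr": 0.05, "minimum": 120},
]

# Avalanche: highest APR first. Snowball: smallest starting balance first.
avalanche = simulate_payoff(debts, 600, key=lambda d: -d["apr"])
snowball = simulate_payoff(debts, 600, key=lambda d: d["balance"])

print("avalanche (months, interest):", avalanche)
print("snowball  (months, interest):", snowball)
```

With the same monthly budget, the avalanche ordering pays less total interest in this example because every extra dollar goes where interest accrues fastest. Swap in your own balances and rates to see how large the gap is for you.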

Financial Education vs. Financial Advice: Know the Difference

Education is learning how a Roth IRA works. Advice is deciding whether you should open one this year, given your specific income, tax bracket, employer match, existing debt, and five-year job stability outlook.

AI is good at the first. It is not qualified to do the second — and the cost of confusing the two is financial, not theoretical.

The practical move is a hybrid approach. Use AI to get literate. Use it to understand terminology, build a mental model of how money works, and prepare specific questions. Then bring those questions to a human.

That human doesn't have to be expensive. Fee-only fiduciary advisors often charge by the hour or by the session; typical rates run $200–$400 for a one-time session, and many offer sliding-scale fees. Nonprofit credit counselors are often free. The National Foundation for Credit Counseling (NFCC) connects people to certified counselors at no cost. Many employers include free financial wellness tools as a benefit. Credit unions frequently offer one-on-one consultations for members. These resources exist. Most people never use them.

How to Actually Use AI for Financial Questions

Stop treating AI responses about money as answers. Treat them as starting points.

When a chatbot tells you something about taxes or investing, ask: Is this general or specific to my situation? Is this current? Does it account for my state? What would a professional say if I brought this to them?

The most dangerous mistake you can make with AI financial advice is mistaking confidence for accuracy. AI sounds equally confident whether it is right or wrong, which is exactly why it's so easy to trust it when you shouldn't.

Use these tools to become the kind of person who asks better questions. Know enough to recognize when a topic is complicated enough to need a professional. The goal isn't to distrust AI entirely — it's to stop outsourcing judgment to something that bears none of the consequences.

The bot doesn't pay your taxes. You do.